Consulting, case-study pilots, training, and implementation for auditable scientific AI.

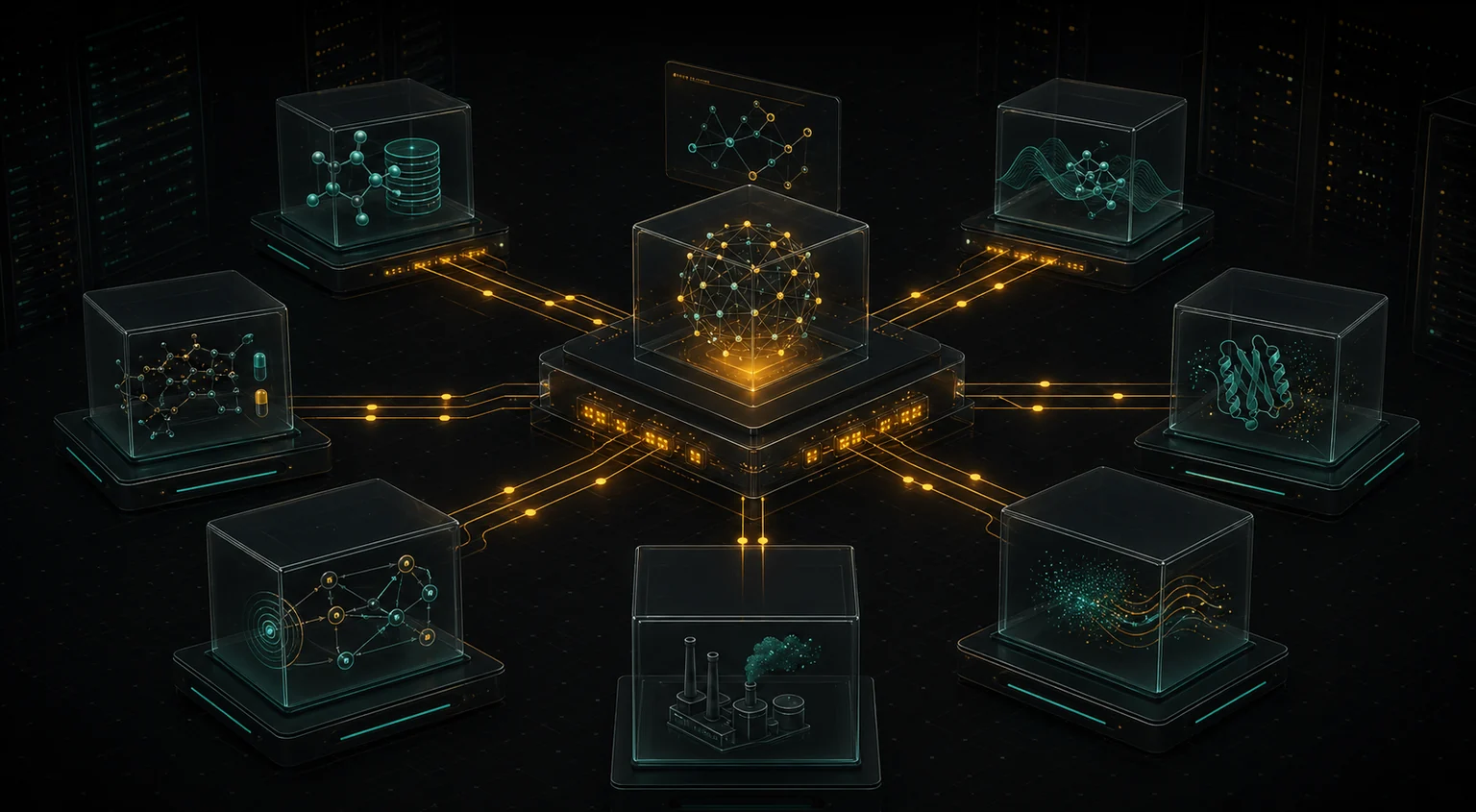

in4r helps teams move from AI curiosity to working infrastructure through advisory support, paid case-study pilots, team training, scientific hackathons, custom MCP implementation, and governance for review-heavy workflows. This includes infrastructure around NAM-derived evidence, model integration, and expert review.

in4r provides software architecture, workflow infrastructure, training, and decision-support tooling. We do not provide certified regulatory risk assessments, regulatory approvals, or final safety decisions. Scientific interpretation, regulatory judgment, and accountable use remain with the client or responsible expert team. in4r is an independent company and is not an official VHP4Safety platform or consortium service unless explicitly stated. References to VHP4Safety, O-QT, ToxMCP, publications, or public repositories are provided for provenance and scientific context and do not imply institutional endorsement unless explicitly stated.

Build a case study together, then decide what should become infrastructure.

in4r works with a small number of teams as a hands-on consulting and implementation partner. A paid case-study pilot gives us a concrete workflow to improve now, while its reusable pieces can become better-documented open infrastructure over time.

Discuss a case-study pilot

Scientific AI consulting

Advisory support for teams deciding how to use AI in toxicology, chemical safety, biotech/R&D, or evidence-review workflows without losing accountability.

Case-study pilot

A focused engagement to build one credible toxicology, chemical safety, or R&D case study with representative inputs, review gates, and an inspectable output.

MCP implementation partner

Hands-on development of custom MCP servers, tool wrappers, evidence pipelines, and agentic applications around your data, tools, and SOPs.

Open-source sponsorship

Fund public ToxMCP modules and documentation while steering work toward real safety-science use cases your team cares about.

AI training, workshops & hackathons

Practical sessions for scientific teams: MCPs, agentic workflows, evaluation patterns, review gates, and hands-on case-study sprints around your domain.

Start with consulting or a case-study pilot, then build what proves useful.

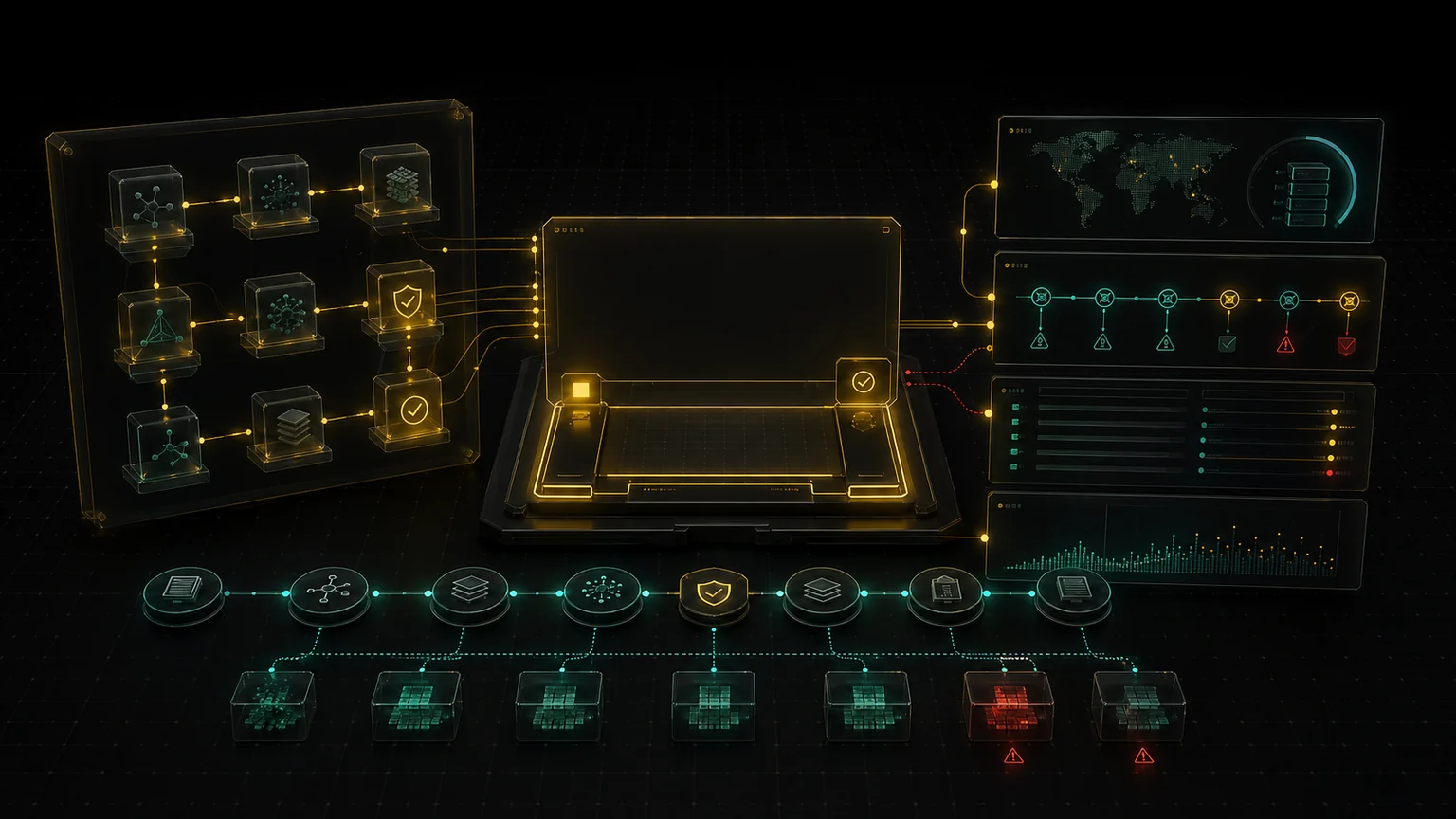

Assess & Blueprint

Map one high-value scientific workflow, identify evidence gaps, and define the pilot boundary before anything is automated.

Explore Assessment

Pilot & Validate

Build one focused case study, test it on representative inputs, and decide what should scale with evidence in hand.

Explore Pilot

Build & Integrate

Develop MCP-native workflow infrastructure around your tools, data sources, SOPs, and human review process.

Explore Build

Governance & Enablement

Turn the pilot into an operating model with audit procedures, review gates, training, and improvement loops.

Explore Governance

MCPs and Agentic Systems You Can Inspect

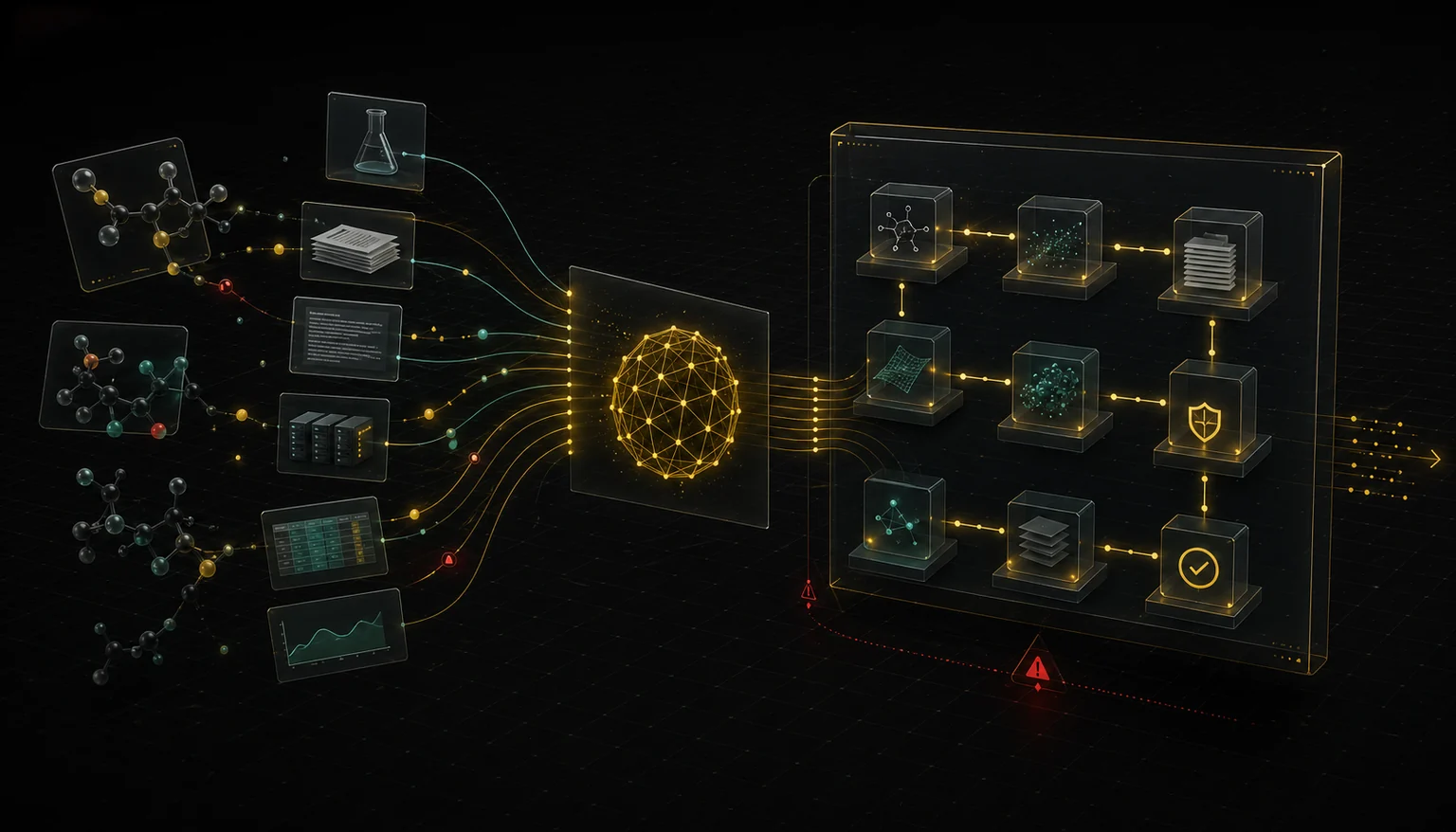

We do not sell generic AI. We build auditable infrastructure — custom MCP servers and multi-agent applications — where tool calls, source references, assumptions, and outputs are traceable, bounded, and reviewable.

Custom MCP Servers

We build guardrailed, auditable MCP servers that connect your scientific tools and data sources to AI agents through a standard, inspectable protocol. Every tool call is logged, bounded, and reviewable.

- MCP-native tool definitions

- Guardrailed inputs & outputs

- Full audit logging per call

- Pluggable across agent frameworks
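
As an illustration of what "logged, bounded, and reviewable" can mean for a single tool call, here is a minimal sketch in plain Python. It does not use any MCP SDK. The names `audited_tool`, `require_cas_number`, `lookup_hazard`, and the in-memory `AUDIT_LOG` are hypothetical, chosen for this example only, and a real deployment would write to a durable audit store.

```python
import functools
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Callable

# Hypothetical in-memory stand-in for a durable audit store.
AUDIT_LOG: list[dict[str, Any]] = []


def audited_tool(name: str, validate: Callable[[dict], None]):
    """Wrap a tool so every call is validated first and always recorded."""
    def decorator(func: Callable[..., Any]):
        @functools.wraps(func)
        def wrapper(**kwargs: Any) -> Any:
            entry: dict[str, Any] = {
                "tool": name,
                "called_at": datetime.now(timezone.utc).isoformat(),
                "arguments": kwargs,
            }
            try:
                validate(kwargs)  # guardrail: reject out-of-bounds inputs
                result = func(**kwargs)
                entry["status"] = "ok"
                # Store a hash of the result so reviewers can confirm what was returned.
                entry["result_sha256"] = hashlib.sha256(
                    json.dumps(result, sort_keys=True).encode()
                ).hexdigest()
                return result
            except Exception as exc:
                entry["status"] = "failed"
                entry["error"] = str(exc)
                raise
            finally:
                AUDIT_LOG.append(entry)  # logged whether the call succeeded or not
        return wrapper
    return decorator


def require_cas_number(args: dict) -> None:
    # Assumed guardrail: accept only a CAS-like shape such as "50-00-0".
    # This checks the format only, not the CAS check digit.
    parts = str(args.get("cas", "")).split("-")
    if len(parts) != 3 or not all(p.isdigit() for p in parts):
        raise ValueError(f"invalid CAS number: {args.get('cas')!r}")


@audited_tool("lookup_hazard", validate=require_cas_number)
def lookup_hazard(cas: str) -> dict:
    # Placeholder result; a real tool would query a curated data source.
    return {"cas": cas, "records": []}
```

Calling `lookup_hazard(cas="50-00-0")` appends an entry with status `ok`, while a malformed identifier raises `ValueError` and still leaves a `failed` entry behind for review.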

Agentic Applications

We design and deploy multi-agent AI systems that support complex scientific workflows — from chemical analysis to hazard evidence retrieval and structuring — while keeping human experts in control at every critical review point.

- Multi-agent orchestration

- Human-in-the-loop checkpoints

- Structured, auditable outputs

- Evidence bundle generation
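
A human-in-the-loop checkpoint can be sketched as a gate that refuses to release an output until a named reviewer has approved it. The example below is a simplified sketch under that assumption. `EvidenceBundle`, `record_review`, `release`, and `ReviewGateError` are hypothetical names for this illustration, not part of any in4r or MCP API.

```python
from dataclasses import dataclass, field
from enum import Enum


class ReviewStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


class ReviewGateError(RuntimeError):
    """Raised when an output is requested before an expert has approved it."""


@dataclass
class EvidenceBundle:
    title: str
    claims: list[str]
    status: ReviewStatus = ReviewStatus.PENDING
    review_notes: list[str] = field(default_factory=list)


def record_review(bundle: EvidenceBundle, reviewer: str, approved: bool, note: str) -> None:
    """Record the expert decision together with who made it and why."""
    bundle.status = ReviewStatus.APPROVED if approved else ReviewStatus.REJECTED
    bundle.review_notes.append(f"{reviewer}: {note}")


def release(bundle: EvidenceBundle) -> dict:
    """Return the structured output only after the review gate has been passed."""
    if bundle.status is not ReviewStatus.APPROVED:
        raise ReviewGateError(
            f"bundle {bundle.title!r} is {bundle.status.value}, not approved"
        )
    return {
        "title": bundle.title,
        "claims": list(bundle.claims),
        "review_notes": list(bundle.review_notes),
    }
```

In this sketch an agent can assemble the bundle, but `release` raises `ReviewGateError` until `record_review` has been called with `approved=True`, so the decision stays with the responsible expert.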

Auditable Workflow Integration

We integrate disparate data sources, models, and review processes into unified workflows where every transformation is logged, every claim is traceable, and every output is defensible.

- Multi-source data integration

- Provenance tracking

- Review gate design

- Reproducible execution
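
One common way to make transformations traceable is a hash-chained provenance log, where each record stores a hash of its payload and of the previous record. The sketch below shows that general technique with the Python standard library. It is an assumption about how provenance tracking could be implemented, not a description of in4r's tooling, and `ProvenanceStep`, `append_step`, and `verify` are hypothetical names.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceStep:
    step: str             # what was done, e.g. "normalize identifiers"
    source: str           # where the input came from
    payload_sha256: str   # hash of the data produced by this step
    previous_sha256: str  # digest of the preceding record ("" for the first)

    def digest(self) -> str:
        body = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(body).hexdigest()


def append_step(chain: list[ProvenanceStep], step: str, source: str, payload: dict) -> list[ProvenanceStep]:
    """Return a new chain with one more record linked to the last one."""
    payload_hash = hashlib.sha256(
        json.dumps(payload, sort_keys=True).encode()
    ).hexdigest()
    previous = chain[-1].digest() if chain else ""
    return chain + [ProvenanceStep(step, source, payload_hash, previous)]


def verify(chain: list[ProvenanceStep]) -> bool:
    """Check that every record points at the digest of the record before it."""
    previous = ""
    for record in chain:
        if record.previous_sha256 != previous:
            return False
        previous = record.digest()
    return True
```

Because each record embeds the digest of its predecessor, editing any record that has a successor makes `verify` return `False`. Protecting the final record would need an extra measure, such as storing the last digest separately, which this sketch leaves out.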

Build one credible case study, not a platform migration.

Toxicology teams already trust well-designed case studies. The best first pilot is narrow enough to validate quickly and important enough that auditability matters. We define the evidence sources, tool boundaries, review gates, and output artifact before automating anything.

One review workflow, one responsible expert group, one inspectable output, and a clear before/after comparison.

OECD QSAR and read-across dossier pilot

Connect Toolbox evidence, profiler alerts, analogue logic, and expert review into a dossier workflow with provenance attached to every claim.

Chemical safety evidence packet

Federate identity, hazard evidence, exposure context, bioactivity, and literature into a packet ready for expert review by the client's scientific or regulatory team.

Exposure-to-PBPK handoff workflow

Turn product-use assumptions into deterministic external-dose scenarios and package them for downstream kinetic modeling or expert review.

Literature-to-evidence synthesis

Transform scattered papers, agency reports, and internal notes into a structured evidence map with conflicts, limitations, and source trails exposed.

Private tool and SOP integration

Wrap internal scripts, databases, SOPs, and review gates in MCP-native interfaces so agents can assist without bypassing governance.

Start with one case-study pilot or advisory retainer.

Bring one safety-science or research case study. We will define the pilot boundary, consulting scope, evidence sources, review gates, and deployment path before scaling anything. We are opening pilot and design-partner conversations for teams operationalizing AI in review-heavy scientific workflows.